Last Friday the endpoint security company CrowdStrike had a little oopsie:

> On July 19, 2024 at 04:09 UTC, as part of ongoing operations, CrowdStrike released a sensor configuration update to Windows systems. Sensor configuration updates are an ongoing part of the protection mechanisms of the Falcon platform. This configuration update triggered a logic error resulting in a system crash and blue screen (BSOD) on impacted systems.
>
> The sensor configuration update that caused the system crash was remediated on Friday, July 19, 2024 05:27 UTC.

You may have learned about this boring-sounding but oh-so-impactful event when you arrived at the airport for your morning flight and found all of the departure boards blue-screening. Or perhaps you went to buy a latte for breakfast and the coffee shop’s point-of-sale machine didn’t work. Or you turned on the morning news and heard about the “Microsoft outage” or the “global IT outage”.

So, uh, what happened?

The problem

The short version: CrowdStrike pushed out a bad “channel” file, and the kernel driver that reads it crashed.

CrowdStrike has a product named “Falcon”, which is endpoint detection and response (EDR) software. Think “really powerful anti-virus”. New threats are always emerging, so Falcon needs to be updated multiple times per day. Some of these updates are “channel” files published by CrowdStrike.

A computer’s operating system is composed of multiple layers of software. Some software runs at a very low level, doing stuff like talking to your CPU, RAM, and hard disks – this is the kernel. Other software runs higher in the stack and communicates with all the important parts of the computer by talking to the lower layers such as the kernel – this software runs in “user space”.

When something goes wrong in the kernel, bad stuff can happen and the entire operating system can crash. When bad stuff happens in user space, that’s no fun for the user, but the OS continues chugging along because the kernel keeps running. Consider your car. It has an infotainment system (aka a radio) and some software that runs the engine. If the infotainment system crashes, you wouldn’t expect the engine to stop working! Same thing with code running in user space: when it crashes, it doesn’t bork the kernel, and the machine keeps on humming.
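To make the user-space half of the car analogy concrete, here is a small illustrative Python sketch (nothing CrowdStrike- or Windows-specific). `run_crashing_child` is a made-up helper that starts a second Python process which deliberately kills itself with `os.abort()`. The operating system cleans up just that one process, and the parent carries on. There is no equally safe way to demo a kernel crash from a script, which is rather the point.

```python
import subprocess
import sys


def run_crashing_child() -> int:
    """Start a separate Python process that crashes itself; return its exit code."""
    # os.abort() terminates the child abnormally (SIGABRT on Linux/macOS).
    # The crash is confined to the child process; the OS tears it down.
    result = subprocess.run([sys.executable, "-c", "import os; os.abort()"])
    return result.returncode


if __name__ == "__main__":
    code = run_crashing_child()
    # We only reach this line because the child's crash did not take us down.
    print(f"child crashed with exit code {code}; parent is still running")
```

On Linux or macOS the reported exit code is negative (the child was killed by signal 6, SIGABRT), but the parent process never notices anything beyond that number.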

CrowdStrike’s Falcon software runs in the kernel,[1] so you can see where this is going to go wrong.[2]

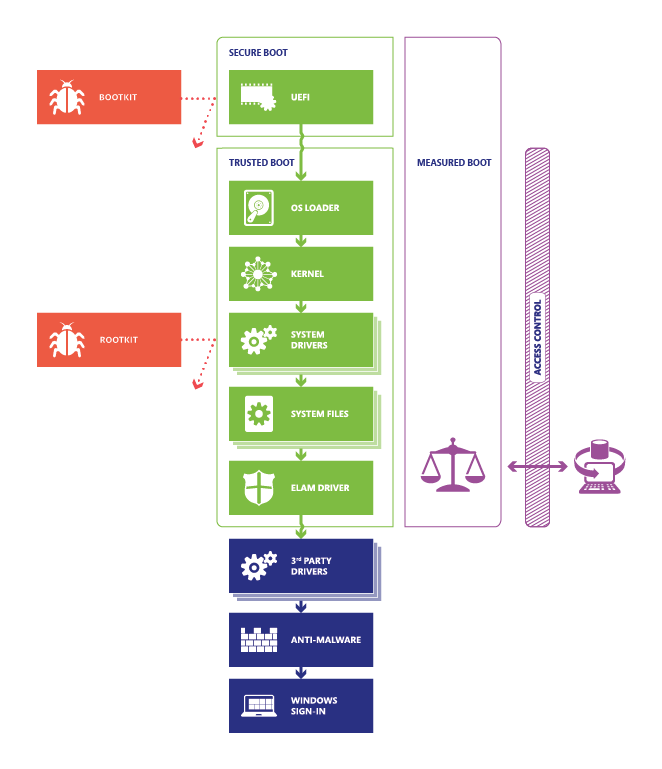

Here’s a nice image from Microsoft showing how the computer boots up:

(Microsoft’s diagram: “Secure Boot, Trusted Boot, and Measured Boot block malware at every stage”)

Running Falcon’s drivers in the kernel makes any mistake incredibly dangerous, because if the kernel crashes, the entire computer crashes.

Falcon reads these “channel” files into its kernel driver. And when it gets a bad file, that kernel driver crashes and brings the whole kernel down along with it. The “channel” file was pushed in real time to a bunch of running systems; they did not even need to reboot to be impacted! But once they got the file, they crashed and rebooted… at which point Falcon loaded again during boot (very early on, per the above diagram), crashed the kernel, and rebooted the system. On and on it went.

Oops!

Can we fix this quickly?

No.

The immediate crash-on-boot symptom makes it difficult to create a fix that can be deployed and run automatically. The bad update arrived while each machine was running and had a network connection over which to receive updates. Afterward, many machines crashed during boot before networking came alive. Therefore, CrowdStrike could not remotely push out a second update fixing their mistake, because the machines they had to fix could not communicate over the network. Unfortunately, there was nothing in Windows that checked for “hey, this same kernel driver has crashed 5 times in a row, maybe we should try booting without it next time…”

Oops again!
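Here is a toy Python model of that missing safeguard, purely as an illustration. Everything in it is hypothetical: `BootRecord`, `boot`, the driver names, and the threshold of 5 (borrowed from the “5 times in a row” above) are invented for this sketch and are not a real Windows API or setting. Each simulated boot loads drivers in order; a driver that has crashed the machine `MAX_CONSECUTIVE_CRASHES` boots in a row gets skipped, and any driver that loads cleanly has its crash streak reset.

```python
from dataclasses import dataclass, field

# Hypothetical threshold, echoing the "5 times in a row" wish above.
MAX_CONSECUTIVE_CRASHES = 5


@dataclass
class BootRecord:
    """Hypothetical state that would persist across reboots (e.g. on disk)."""
    consecutive_crashes: dict[str, int] = field(default_factory=dict)


def boot(drivers: dict[str, bool], record: BootRecord) -> str:
    """Simulate one boot.

    `drivers` maps a driver name to True if loading it crashes the kernel.
    Returns "crashed:<name>" if a driver took the machine down, else "booted".
    """
    for name, crashes_on_load in drivers.items():
        if record.consecutive_crashes.get(name, 0) >= MAX_CONSECUTIVE_CRASHES:
            continue  # the guard: keep skipping a driver that keeps crashing
        if crashes_on_load:
            record.consecutive_crashes[name] = record.consecutive_crashes.get(name, 0) + 1
            return f"crashed:{name}"  # kernel is down; later drivers never load
        record.consecutive_crashes[name] = 0  # loaded cleanly, reset its streak
    return "booted"


if __name__ == "__main__":
    drivers = {"disk": False, "network": False, "falcon_sensor": True}
    record = BootRecord()
    print([boot(drivers, record) for _ in range(7)])
```

Run as-is, the first five boots crash on `falcon_sensor` and every boot after that succeeds without it. The obvious trade-off: a machine that skips its security driver boots up unprotected.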

The owners of these computers had to manually access each one, boot it up in a special way (Safe Mode), remove the faulty CrowdStrike-pushed file, and reboot.[3] Doing this machine by machine takes a while when some organizations have 350,000-plus impacted systems.
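CrowdStrike’s published workaround spelled out which file to remove: the channel file matching `C-00000291*.sys` in `%WINDIR%\System32\drivers\CrowdStrike`. The documented steps had admins delete it by hand after booting into Safe Mode or the Windows Recovery Environment. The Python below only sketches that file-matching step; `remove_bad_channel_files` is a hypothetical helper (not a CrowdStrike tool), and it takes the directory as a parameter so it can be tried on a scratch folder instead of a real Windows install.

```python
from pathlib import Path

# Filename pattern from CrowdStrike's workaround for the July 19, 2024 incident.
BAD_CHANNEL_FILE_PATTERN = "C-00000291*.sys"


def remove_bad_channel_files(driver_dir: Path) -> list[str]:
    """Delete files in driver_dir matching the bad channel-file pattern.

    Hypothetical helper for illustration; returns the names of deleted files.
    """
    removed = []
    for path in sorted(driver_dir.glob(BAD_CHANNEL_FILE_PATTERN)):
        if path.is_file():
            path.unlink()  # delete the bad channel file
            removed.append(path.name)
    return removed
```

On an affected machine the directory would be `Path(r"C:\Windows\System32\drivers\CrowdStrike")`, assuming Windows is installed on `C:`. The hard part was never the deletion itself; it was getting each machine into a state where you could run it.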

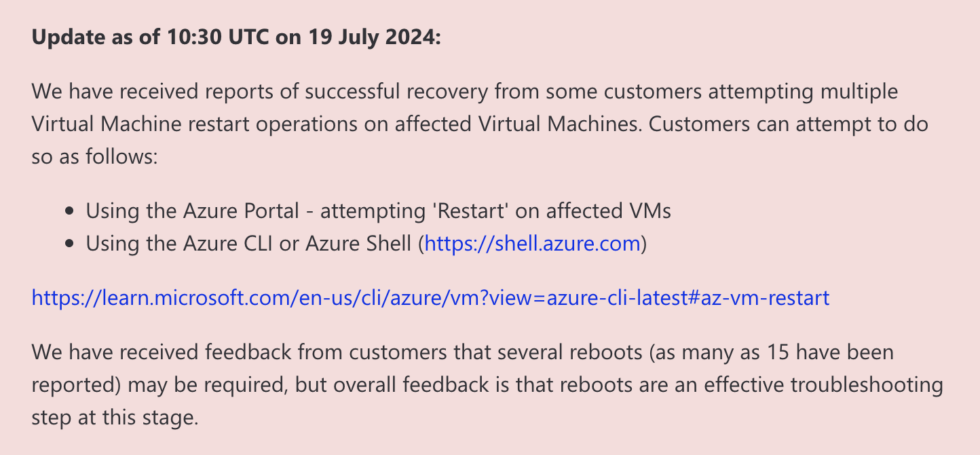

Another “solution”, one that spawned thousands of hilarious memes, came from Microsoft itself, which recommended rebooting affected machines up to 15 times! Sure, they soften the blow by saying “reported” in there, but this was text written by Microsoft and published on an official channel.

As published on azure.status.microsoft/en-us/status. Why is .microsoft a top-level domain? Who knows.

Have you tried turning it off and on again?

30 July 2024 EDIT: Perhaps a late-night snack will motivate those IT folks to really get their butts in gear and fix those PCs busted by CrowdStrike’s terrible update, though! Seriously, CrowdStrike sent impacted orgs a $10 Uber Eats gift card. Those who slept on this deal and tried to redeem it later found that Uber had cancelled most of those gift card codes because they looked like fraud. Amazing response here. No notes.

The impact

The Verge has some good roundups of impacted organizations:

- Most airlines (I’d originally noted that, hilariously, Southwest was spared because its PC OS was too old to use CrowdStrike in the first place. 30 July 2024 EDIT: unfortunately the humor is misplaced, as there’s nothing to back this up.)

- Most airports

- Home Depot and REI (probably more, but these are the ones I saw pictures of)

- Times Square (!!) in New York City

- A supermarket chain in Australia

- Chanel stores

- Amtrak

- Sky News

Pretty much anyone running Windows in production for business-critical infrastructure was hit, which is effectively every business plus some local and state governments.

Some businesses got back on their feet quickly, albeit using pen and paper or falling back to a different set of computer systems. Business continuity planners the world over rejoice! Others, like airlines, continue to cancel thousands of flights per day – even today, Tuesday, several days after the initial problem occurred.

24 July 2024 EDIT: As of today, insurance companies are estimating a $5.4 billion price tag for all of this downtime and recovery. The cost of paying your IT staff to work overtime to fix all those broken PCs sure does add up! Add to that the operational losses and missed sales from all the downtime.

What does it mean?

Given the blast radius of ~8.5 million Microsoft Windows PCs, it’s a good time to reflect on how we got here. A lot of great commentary already exists:

- Kevin Beaumont writing on his blog DoublePulsar

- Ben Thompson writing at Stratechery

- Nick Heer writing on his blog Pixel Envy

- Defensive Security Podcast Ep. 273 with Jerry Bell, former CISO at IBM

I write this blog to memorialize interesting moments in history I would one day like to look back upon. This feels like one of those moments.

This CrowdStrike failure is probably the biggest computer system outage to date. I wanted to drop a line here to remember it in the future. A future, I suspect, where this will not end up being the high-water mark of computer system outages that greatly impact daily life.

My takeaway today is that if you build a system that runs right at the heart of critical infrastructure, you should build it thoughtfully, and carefully, and with a good amount of oversight and testing. We don’t have the complete story yet of how this update was able to make it from CrowdStrike’s servers onto these millions of machines, but we do know it won’t be the fault of a single person who wrote a bad channel file. All failure is inherently multi-faceted.

- There’s the management structure at the company that hired the individuals who wrote the code, approved the plans for testing and release, and defined the company culture around how software is built, tested, and deployed.

- There are all of the businesses that chose CrowdStrike and installed it on their computers.

- There’s Microsoft, which allowed such deep access to the kernel of its operating system.

- There are the businesses that chose Windows over macOS and Linux.

There’s no single source of this problem, because a different choice across a million variables along the way could have prevented this outcome.

As the saying goes, “a problem is just opportunity in disguise.” What opportunity is being presented here, and what will change in a year, or five? I’m guessing “not much”, but when I revisit this post in the future I’ll be happy to be proven wrong.

Enjoy this delightfully sad video of IT folks fixing things:

[1] CrowdStrike was using an ELAM (Early Launch Anti-Malware) driver, which loads as early as possible in the boot sequence, as explained in this Mastodon post by Matt Taggart.

[2] The “why” behind Falcon running in the kernel is complex and nuanced and not something I can clearly articulate. A simple explanation would be that the deeper into the stack the anti-virus can run, the deeper into the stack it can defend against problems. If you only run in user space and the malware finds a way to infect the kernel… welp.

[3] And for machines with encrypted disks via BitLocker, each machine has its own BitLocker key, which has to be entered manually during the boot sequence, making the recovery process more difficult and time-consuming.